Planet’s Land Surface Temperature (LST) Planetary Variable, delivered through the Subscriptions API, offers a high-quality, reliable dataset for modeling weather systems and improving decision-making. This article covers the benefits of using the Subscriptions API to monitor and deliver LST data, walks through a typical workflow, and points to additional resources.

Land Surface Temperature in Subscriptions API¶

Land Surface Temperature provides twice-daily measurements of land surface temperature with high spatial resolution and consistency. LST data eliminates the need to maintain large networks of physical sensors and reflects ground conditions more accurately across large areas. The data is derived from passive microwave observations, which reduces the impact of cloud cover on the measurements. LST data serves diverse use cases, such as:

- enhancing weather models with consistent temperature measurements

- improving urban heat stress monitoring

- refining agricultural models

- monitoring drought conditions

Workflow: Monitoring Temperature Over an Agricultural Area¶

Setting up your script and connecting with Planet services¶

To execute the code in this example, you will need:

- A Planet API key

- Access to the LST-AMSR2_V1.0_100 product for the provided field geometry

- Configured credentials for delivering the results to cloud storage (Google Cloud Storage, Amazon S3, Microsoft Azure Blob Storage, or Oracle Cloud Storage)

The code examples in this workflow are written for Python 3.8 or greater (python>=3.8). Aside from the built-in Python libraries, the following packages are required:

- keyring

- matplotlib

- pandas

- rasterio

- requests

- rioxarray

# Python builtins

import base64

from getpass import getpass

from io import StringIO

import json

import os

# External requirements

import keyring

import matplotlib.pyplot as plt

import pandas as pd

import rasterio

import requests

from requests.auth import HTTPBasicAuth

import rioxarray as rx

In this example, the keyring package will be used to store/retrieve your Planet API key. You are prompted to enter the key once. Then it is securely stored on the system’s keyring.

Warning

Anyone with access to this system’s user account can also access the keyring, so only enter your Planet API key on a system’s user account that only you can use.

# Authentication

update = False # Set to True if you want to update the credentials in the system's keyring

if keyring.get_password("planet", "PL_API_KEY") is None or update:
    keyring.set_password("planet", "PL_API_KEY", getpass("Planet API Key: "))
else:
    print("Using stored api key")

PL_API_KEY = keyring.get_password("planet", "PL_API_KEY")

Next, confirm your API key by making a call to Planet services. You should receive back a <Response [200]>.

# Planet's Subscriptions API base URL for making RESTful requests

BASE_URL = "https://api.planet.com/subscriptions/v1"

auth = HTTPBasicAuth(PL_API_KEY, '')

response = requests.get(BASE_URL, auth=auth)

print(response)
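The printed response only shows the status code. As a minimal sketch, the helper below turns a status code into a short hint. The function name `explain_status` and the wording of the hints are illustrative assumptions, not part of Planet's API.

```python
# Hypothetical helper: translate an HTTP status code into a short hint.
def explain_status(status_code):
    if status_code == 200:
        return "OK: the API key was accepted"
    if status_code in (401, 403):
        return "Authentication failed: check the API key and your product access"
    if 500 <= status_code < 600:
        return "Server error: retry later"
    return f"Unexpected status code: {status_code}"


print(explain_status(200))
```

In the workflow above, this could be called as `explain_status(response.status_code)`.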

Creating a Planetary Variables Subscription with the Subscriptions API¶

To create a subscription, provide a JSON request object that details the subscription parameters, including:

- subscription name (required)

- Planetary Variable source type (required)

- data product id (required)

- subscription location in GeoJSON format (required)

- start date for the data subscription (required)

- end date for the data subscription (optional)

For further details on available parameters, see Create a Planetary Variables Subscription in Subscribing to Planetary Variables.
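The parameters listed above map onto a nested JSON object. The sketch below assembles such an object from plain arguments, using the same keys as the payloads in this workflow. The function name `build_subscription_payload` and the example values are hypothetical, chosen only for illustration.

```python
# Hypothetical helper that assembles a subscription request object.
def build_subscription_payload(name, source_type, product_id, geometry,
                               start_time, end_time=None, delivery=None):
    parameters = {
        "id": product_id,
        "start_time": start_time,
        "geometry": geometry,
    }
    # The end time is optional, so only include it when it is given
    if end_time is not None:
        parameters["end_time"] = end_time

    payload = {
        "name": name,
        "source": {"type": source_type, "parameters": parameters},
    }
    # Omitting the delivery block yields a metadata-only subscription
    if delivery is not None:
        payload["delivery"] = delivery
    return payload


# Illustrative geometry: a small closed square (made-up coordinates)
example_geometry = {
    "type": "Polygon",
    "coordinates": [[[0.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, 0.0], [0.0, 0.0]]],
}
example_payload = build_subscription_payload(
    "Example subscription",
    "land_surface_temperature",
    "LST-AMSR2_V1.0_100",
    example_geometry,
    "2022-09-18T00:00:00Z",
)
print(sorted(example_payload["source"]["parameters"]))
```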

Create your JSON subscription description object¶

This first example creates a subscription for 5 years of 100m resolution LST data over the agricultural area of Red Cloud, Nebraska.

Subscriptions can be created with or without a delivery parameter, which specifies a storage location to deliver raster data. For this first analysis we do not require raster assets, so the delivery parameter has been omitted to create a metadata-only subscription.

To confirm if the provided geometry fits into a specific area of access (AOA) see the following code example.

# Create a new subscription JSON object

timeseries_payload = {

"name": "Red Cloud, NE - 5 years - LST-AMSR2_V1.0_100",

"source": {

"type": "land_surface_temperature",

"parameters": {

"id": "LST-AMSR2_V1.0_100",

"start_time": "2018-05-01T00:00:00Z",

"end_time": "2023-05-01T00:00:00Z",

"geometry": {

"coordinates": [[[-98.74073, 40.33321],

[-98.74073, 39.99023],

[-98.21980, 39.99023],

[-98.21980, 40.33321],

[-98.74073, 40.33321]]],

"type": "Polygon"

}

}

}

}
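Before sending the request, it can help to sanity-check the geometry locally. The following sketch checks only generic GeoJSON polygon properties (closed rings, at least four positions, coordinates in range). It does not check the area of access, and the function name `validate_polygon` is a hypothetical choice.

```python
# Hypothetical local check of a GeoJSON Polygon; returns a list of problems.
def validate_polygon(geometry):
    problems = []
    if geometry.get("type") != "Polygon":
        return ["type must be 'Polygon'"]

    rings = geometry.get("coordinates", [])
    if not rings:
        problems.append("no coordinates given")

    for ring in rings:
        if len(ring) < 4:
            problems.append("ring needs at least 4 positions")
        if ring and ring[0] != ring[-1]:
            problems.append("ring is not closed")
        for position in ring:
            lon, lat = position[0], position[1]
            if not (-180 <= lon <= 180 and -90 <= lat <= 90):
                problems.append(f"position out of range: {(lon, lat)}")
    return problems


# The Red Cloud, NE polygon used in this workflow
red_cloud = {
    "type": "Polygon",
    "coordinates": [[[-98.74073, 40.33321],
                     [-98.74073, 39.99023],
                     [-98.21980, 39.99023],
                     [-98.21980, 40.33321],
                     [-98.74073, 40.33321]]],
}
print(validate_polygon(red_cloud))  # prints []
```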

Create a subscription using your JSON description object¶

These details are sent to the Subscriptions API to create a new subscription and receive its unique subscription ID.

def create_subscription(subscription_payload, auth, headers):
    try:
        response = requests.post(BASE_URL, json=subscription_payload, auth=auth, headers=headers)
        response.raise_for_status()  # raises an error if the request was malformed
    except requests.exceptions.HTTPError:
        print(f"Request failed with {response.text}")  # show the reason why the request failed
    else:
        response_json = response.json()
        subscription_id = response_json["id"]
        print(f"Successful request with {subscription_id=}")
        return subscription_id

# set content type to json

headers = {'content-type': 'application/json'}

# create a subscription

timeseries_subscription_id = create_subscription(timeseries_payload, auth, headers)

print(timeseries_subscription_id)

Confirm the subscription status¶

To retrieve the status of the subscription, send a GET request to the subscription endpoint. Once the subscription is in the running or completed state, the delivery is either in progress or completed, respectively. A subscription with an end date in the future remains in the running state until its end_time is in the past. See Status descriptions for the complete overview of possible status descriptions.

While you can use the data as it becomes available to start building your analysis, running the subscription may take a while depending on factors such as the size of your AOI and the time range.

def get_subscription_status(subscription_id, auth):
    subscription_url = f"{BASE_URL}/{subscription_id}"
    subscription_status = requests.get(subscription_url, auth=auth).json()['status']
    return subscription_status

status = get_subscription_status(timeseries_subscription_id, auth)

print(status)
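Because a subscription can take a while to reach the running or completed state, the status check can be repeated at intervals. The sketch below polls an arbitrary status function until one of the wanted states appears or a timeout expires. The name `wait_for_status`, its defaults, and the placeholder states in the demo are assumptions for illustration only.

```python
import time


# Hypothetical polling helper; get_status is any zero-argument callable
# that returns the current status string.
def wait_for_status(get_status, wanted=("running", "completed"),
                    interval=30.0, timeout=3600.0,
                    sleep=time.sleep, clock=time.monotonic):
    deadline = clock() + timeout
    while True:
        status = get_status()
        if status in wanted:
            return status
        if clock() >= deadline:
            raise TimeoutError(f"Last status was {status!r}")
        sleep(interval)


# Demo without network access: a fake sequence of placeholder states
fake_states = iter(["state_a", "state_b", "running"])
final_status = wait_for_status(lambda: next(fake_states),
                               interval=0, sleep=lambda seconds: None)
print(final_status)  # prints running
```

With the function defined earlier, a call could look like `wait_for_status(lambda: get_subscription_status(timeseries_subscription_id, auth))`.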

Retrieving and analyzing the subscription data¶

The metadata results generated for this subscription can be retrieved directly in CSV format. Use the Pandas library to read this into a dataframe to perform further analysis and to create a time series visualization.

Data that is invalid for analysis, for example due to having limited coverage on a certain day, is filtered out of the dataframe. For more information on data validity, see Metadata Results.

# Retrieve the resulting data in CSV format

results_csv = requests.get(f"{BASE_URL}/{timeseries_subscription_id}/results?format=csv", auth=auth)

# Read CSV Data into a Pandas dataframe

csv_text = StringIO(results_csv.text)

df = pd.read_csv(csv_text, parse_dates=["item_datetime", "local_solar_time"], index_col="local_solar_time")

# Filter by valid data only

df = df[df["lst.band-1.valid_percent"].notnull()]

df = df[df["lst.band-1.valid_percent"] > 0]

df = df[df["status"] != "QUEUED"]

print(df.head())

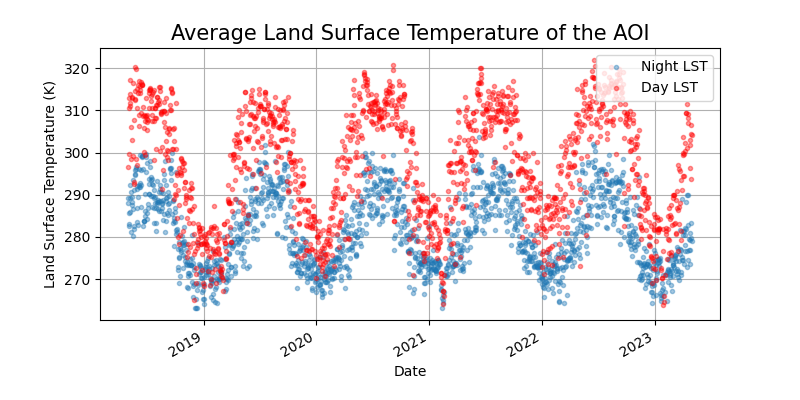
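To see what the validity filter does without calling the API, the same three conditions can be applied to a small CSV using only the standard library. The sample rows below are made up for illustration. Only the column names come from the code above, and the `SUCCESS` status value is an assumed placeholder.

```python
import csv
from io import StringIO

# Made-up sample data; real results contain more columns
SAMPLE_CSV = """item_datetime,local_solar_time,status,lst.band-1.valid_percent,lst.band-1.mean
2022-09-18T07:30:00Z,2022-09-18T01:30:00,SUCCESS,100,288.4
2022-09-18T19:30:00Z,2022-09-18T13:30:00,SUCCESS,0,
2022-09-19T07:30:00Z,2022-09-19T01:30:00,QUEUED,,
2022-09-19T19:30:00Z,2022-09-19T13:30:00,SUCCESS,87,301.2
"""


def is_valid(row):
    """Keep rows with a non-empty, positive valid_percent that are not queued."""
    valid_percent = row["lst.band-1.valid_percent"]
    return (
        valid_percent != ""
        and float(valid_percent) > 0
        and row["status"] != "QUEUED"
    )


valid_rows = [row for row in csv.DictReader(StringIO(SAMPLE_CSV)) if is_valid(row)]
print([row["local_solar_time"] for row in valid_rows])
# prints ['2022-09-18T01:30:00', '2022-09-19T13:30:00']
```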

Now, you are ready to analyze the average temperature of the agricultural region of Red Cloud, NE, over time. Two time series plots are created: one for nighttime observations (captured at 01:30 solar time) and one for daytime observations (captured at 13:30 solar time).

# Plot the Land Surface Temperature time-series for nighttime observations

df.between_time("1:15", "1:45")["lst.band-1.mean"].plot(

grid=True, style=".", alpha=0.4, label="Night LST", figsize=(8, 4)

)

# Plot the Land Surface Temperature time-series for daytime observations

df.between_time("13:15", "13:45")["lst.band-1.mean"].plot(

grid=True, style="r.", alpha=0.4, label="Day LST", figsize=(8, 4)

)

# Extra information for the visualization

plt.ylabel("Land Surface Temperature (K)", size = 10)

plt.xlabel("Date", size = 10)

plt.title("Average Land Surface Temperature of the AOI", size = 15)

plt.legend()

# Display the visualization

plt.show()
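The plot above reports temperatures in kelvin and separates observations by local solar time. As a small sketch, the helpers below convert kelvin to degrees Celsius and label a timestamp as a nighttime (around 01:30) or daytime (around 13:30) observation, using the same 15-minute window as the `between_time` calls. The function names are hypothetical.

```python
from datetime import datetime


def kelvin_to_celsius(kelvin):
    """Convert a temperature from kelvin to degrees Celsius."""
    return kelvin - 273.15


def overpass_label(local_solar_time, tolerance_minutes=15):
    """Return 'night', 'day', or None for a local solar timestamp."""
    minutes = local_solar_time.hour * 60 + local_solar_time.minute
    if abs(minutes - 90) <= tolerance_minutes:    # 01:30 is minute 90
        return "night"
    if abs(minutes - 810) <= tolerance_minutes:   # 13:30 is minute 810
        return "day"
    return None


print(overpass_label(datetime(2022, 9, 18, 13, 30)))  # prints day
print(kelvin_to_celsius(273.15))                       # prints 0.0
```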

Delivering results as a raster map¶

In addition to providing metadata over an Area of Interest, you can also configure the delivery of raster results directly to a cloud storage location.

The following delivery options are currently supported:

- google_cloud_storage

- amazon_s3

- azure_blob_storage

- oracle_cloud_storage

See the supported delivery options, or open the delivery options in the API reference, to review the parameters for each delivery type. This example uses Google Cloud Storage.

Configuring the delivery options¶

To deliver results directly to a Google Cloud Storage bucket, specify the delivery location and provide your authentication credentials.

See the Google Cloud documentation on how to create a service account key. When using AWS, Azure or OCS, use the appropriate credentials for those platforms.

BUCKET_NAME = "lst_subscriptions"

GOOGLE_APPLICATION_CREDENTIALS = "key.json" # path to the google application credentials key

if not os.path.exists(GOOGLE_APPLICATION_CREDENTIALS):
    credentials_path = os.path.abspath(GOOGLE_APPLICATION_CREDENTIALS)
    print(f"No google application credentials found at: {credentials_path}")

# Credentials are expected in base64 format: the following reads the JSON key as bytes,
# applies the base64 encoding, and decodes the result back to a Python str
with open(GOOGLE_APPLICATION_CREDENTIALS, "rb") as f:
    gcs_credentials_base64 = base64.b64encode(f.read()).decode()

delivery_options = {

"type": "google_cloud_storage",

"parameters": {

"bucket": BUCKET_NAME,

"credentials": gcs_credentials_base64

}

}
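The base64 step can be checked locally with a round trip: encode the key bytes, then decode them and compare with the original. The sketch below uses a made-up, non-functional key and a placeholder bucket name; `encode_credentials` is a hypothetical helper name.

```python
import base64
import json


def encode_credentials(raw_bytes):
    """Base64-encode raw key bytes and return the result as a str."""
    return base64.b64encode(raw_bytes).decode()


# Made-up stand-in for the contents of a service account key file
fake_key_bytes = json.dumps(
    {"type": "service_account", "project_id": "example-project"}
).encode()

encoded = encode_credentials(fake_key_bytes)
example_delivery = {
    "type": "google_cloud_storage",
    "parameters": {"bucket": "example-bucket", "credentials": encoded},
}

# Round trip: decoding must give back exactly the original bytes
assert base64.b64decode(encoded) == fake_key_bytes
print(type(encoded).__name__)  # prints str
```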

Create a subscription with delivery configured¶

Now create a new subscription for three days' worth of data over the Red Cloud region.

raster_payload = {

"name": "Red Cloud, NE - 3 days - LST-AMSR2_V1.0_100",

"source": {

"type": "land_surface_temperature",

"parameters": {

"id": "LST-AMSR2_V1.0_100",

"start_time": "2022-09-18T00:00:00Z",

"end_time": "2022-09-20T00:00:00Z",

"geometry": {

"coordinates": [[[-98.74073, 40.33321],

[-98.74073, 39.99023],

[-98.21980, 39.99023],

[-98.21980, 40.33321],

[-98.74073, 40.33321]]],

"type": "Polygon"

}

}

},

"delivery": delivery_options

}

raster_subscription_id = create_subscription(raster_payload, auth, headers)

print(raster_subscription_id)

Results are generated and stored in the configured cloud storage option as GeoTIFF files. The subscription status field changes to completed when all files have been delivered.

status = get_subscription_status(raster_subscription_id, auth=auth)

print(status)

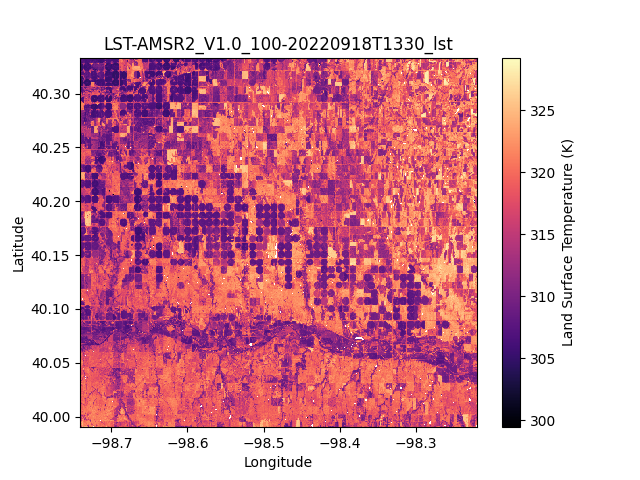

Plot the GeoTIFF¶

The rioxarray extension to rasterio can be used to open and map the delivered GeoTIFF files directly from their cloud storage location.

There are many options for configuring access through the different cloud storage services. Rasterio uses GDAL under the hood, so GDAL's configuration options for network-based file systems apply.

In the following example, the data is read directly from the Google Cloud Storage bucket configured previously to visualize the Land Surface Temperature 100m resolution map over Red Cloud on the 18th of September, 2022.

# Set the filepath to the 18 September 2022 GeoTIFF asset

lst_file_location = f"gs://{BUCKET_NAME}/{raster_subscription_id}/2022/09/18/LST-AMSR2_V1.0_100-20220918T1330_lst.tiff"

# Use the Google Application credentials to allow access to the storage location

with rasterio.env.Env(GOOGLE_APPLICATION_CREDENTIALS=GOOGLE_APPLICATION_CREDENTIALS):
    lst_data = rx.open_rasterio(lst_file_location, mask_and_scale=True, band_as_variable=True)

print(lst_data)

lst_data = lst_data.rename({"x":"Longitude","y":"Latitude"})

lst_data = lst_data.rename_vars({

"band_1":"Land Surface Temperature (K)",

"band_2":"Masked LST values (K)",

})

lst_data["Land Surface Temperature (K)"].plot(cmap="magma")

plt.title("LST-AMSR2_V1.0_100-20220918T1330_lst")

plt.show()
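To open the files for other dates or overpass times, the path can be assembled from its parts. The sketch below assumes that every delivered file follows the same naming pattern as the single path used above. That pattern is inferred from this one example and is not a documented guarantee, and `build_asset_path` and the `abc123` subscription ID are hypothetical.

```python
from datetime import datetime


# Hypothetical helper; the path layout is inferred from the example above.
def build_asset_path(bucket, subscription_id, product_id, observed_at, variable="lst"):
    date_dir = observed_at.strftime("%Y/%m/%d")   # e.g. 2022/09/18
    stamp = observed_at.strftime("%Y%m%dT%H%M")   # e.g. 20220918T1330
    return (
        f"gs://{bucket}/{subscription_id}/{date_dir}/"
        f"{product_id}-{stamp}_{variable}.tiff"
    )


example_path = build_asset_path(
    "lst_subscriptions", "abc123", "LST-AMSR2_V1.0_100", datetime(2022, 9, 18, 13, 30)
)
print(example_path)
# prints gs://lst_subscriptions/abc123/2022/09/18/LST-AMSR2_V1.0_100-20220918T1330_lst.tiff
```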

Learning Resources

Get the details in the Land Surface Temperature Technical Specification. Get Started with Planet APIs. Find a collection of guides and tutorials on Planet University. Also check out the Planet notebooks on GitHub, such as the Subscriptions tutorials: subscriptions_api_tutorial.
