RapidEye, formerly operated by Planet, is a retired constellation of five satellites that operated from 2009 to 2020. RapidEye imagery has a resolution of approximately 5 meters per pixel and is still available for download through Planet Archives.

Constellation and Sensor Overview

The RapidEye constellation consisted of five high-resolution satellites deployed in a single launch.

Imagery Products

RapidEye Products are available for search and download via Planet’s APIs, User Interfaces, and Integrations, in the form of Scene and OrthoTile products, which are encoded in our platform as a set of Item Types and Asset Types.

Item Types

A RapidEye Scene Product is an individual framed scene, captured by the satellite in its line-scan of the Earth. Scenes within a strip overlap and are not organized to any particular tiling grid system.

RapidEye Scene Products range in size from 75 x 50 km to 75 x 300 km. They are represented in the Planet Platform as the REScene item type.

A RapidEye OrthoTile Product is a 25 x 25 km orthorectified, tiled product generated from a set of consecutive scenes within a strip (usually 1 or 2), based on a worldwide, fixed UTM grid system. OrthoTiles are represented in the Planet Platform as the REOrthoTile item type.
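As a rough illustration of how the two item types might be used when searching the archive, the sketch below builds a JSON search body restricted to one RapidEye item type and an acquisition date range. The item type names come from the text above; the filter layout (a `DateRangeFilter` on an `acquired` field) and the helper name `build_search_request` are assumptions for illustration and should be checked against the Data API reference. No request is sent.

```python
import json

# Item type identifiers named in the text above.
RAPIDEYE_ITEM_TYPES = ("REScene", "REOrthoTile")


def build_search_request(item_type, start, end):
    """Build a JSON-serializable search body for one RapidEye item type.

    The filter layout (DateRangeFilter on the "acquired" field) is an
    assumption about the search API, not something this page specifies.
    """
    if item_type not in RAPIDEYE_ITEM_TYPES:
        raise ValueError(f"not a RapidEye item type: {item_type!r}")
    return {
        "item_types": [item_type],
        "filter": {
            "type": "DateRangeFilter",
            "field_name": "acquired",
            "config": {"gte": start, "lte": end},
        },
    }


# Example: OrthoTiles acquired during September 2018.
body = build_search_request(
    "REOrthoTile", "2018-09-01T00:00:00Z", "2018-10-01T00:00:00Z"
)
print(json.dumps(body, indent=2))
```

Rejecting unknown item types early keeps a typo such as `REOrthotile` from turning into an empty result set later.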

Asset Types

RapidEye Scene and OrthoTile imagery products are still available for download in the form of imagery assets. Multiple Asset Types are available for Scene and OrthoTile products; they differ in radiometric processing, rectification, or both.

Asset Type availability varies by Item Type. You can find an overview of supported assets by Item Type here:

Basic Analytic (basic_analytic) assets are non-orthorectified, calibrated, multispectral imagery products that have been corrected for sensor artifacts and transformed to Top of Atmosphere (at-sensor) radiance. These products are designed for data science and analytic applications, and for users who wish to geometrically correct the data themselves (leveraging the associated rational polynomial coefficient Asset Type).

Analytic (analytic) assets are orthorectified, calibrated, multispectral imagery products that have been corrected for sensor artifacts and terrain distortions, and transformed to Top of Atmosphere (at-sensor) radiance. These products are designed for data science and analytic applications which require imagery with accurate geolocation and cartographic projection.

Visual (visual) assets are orthorectified and color-corrected to optimize colors seen by the human eye, providing images as they would look if viewed from the perspective of the satellite. These products are designed for simple and direct visual inspection, and can be used and ingested directly into a Geographic Information System or application.

Surface Reflectance (analytic_sr) assets are orthorectified and radiometrically corrected to ensure consistency across localized atmospheric conditions, and to minimize uncertainty in spectral response across time and location. These products are designed for temporal analysis and monitoring applications, especially in agriculture and forestry sectors.
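The four descriptions above differ along two axes: whether the asset is orthorectified and which radiometric level it carries. A minimal sketch of that comparison as a lookup table follows. The asset names are taken from the text; the `AssetInfo` class, the radiometry labels, and `find_assets` are hypothetical helpers, and the table does not encode which item type offers which asset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetInfo:
    orthorectified: bool
    radiometry: str  # label chosen for this sketch, not an API value


# Summary of the asset descriptions in this section.
ASSETS = {
    "basic_analytic": AssetInfo(False, "toa_radiance"),
    "analytic": AssetInfo(True, "toa_radiance"),
    "visual": AssetInfo(True, "color_corrected"),
    "analytic_sr": AssetInfo(True, "surface_reflectance"),
}


def find_assets(orthorectified, radiometry):
    """Return the asset names matching both properties, sorted by name."""
    return sorted(
        name
        for name, info in ASSETS.items()
        if info.orthorectified == orthorectified and info.radiometry == radiometry
    )


print(find_assets(True, "toa_radiance"))   # ['analytic']
print(find_assets(False, "toa_radiance"))  # ['basic_analytic']
```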

You can find our complete Imagery Product Specification here.

Product Naming

The name of each acquired RapidEye image is designed to be unique and to make the imagery easier to recognize and sort. It includes the date and time of capture, the ID of the satellite that captured it, the product level, and the product type. The name of each downloaded image product is composed of the following elements:

RapidEye Scene

<acquisition_date>T<acquisition_time>_<satellite_id>_<productLevel>_<bandProduct>.<extension>

Example: 2018-09-29T163919_RE1_1B_band1.tif

RapidEye OrthoTile

<tileid>_<acquisition_date>_<satellite_id>_<productLevel>_<bandProduct>.<extension>

Example: 1657017_2018-09-29_RE1_3A_Analytic.tif
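The two naming patterns above can be split back into their elements with regular expressions. The sketch below is one way to do that, assuming the date is always `YYYY-MM-DD`, the scene time is six digits as in the example, and the tile ID is purely numeric; those assumptions come from the two examples only, and `parse_product_name` is a hypothetical helper.

```python
import re

# Shared tail: _<satellite_id>_<productLevel>_<bandProduct>.<extension>
_TAIL = (
    r"_(?P<satellite_id>[^_]+)_(?P<product_level>[^_]+)"
    r"_(?P<band_product>.+)\.(?P<extension>[^.]+)$"
)
SCENE_RE = re.compile(
    r"^(?P<acquisition_date>\d{4}-\d{2}-\d{2})T(?P<acquisition_time>\d{6})" + _TAIL
)
ORTHOTILE_RE = re.compile(
    r"^(?P<tile_id>\d+)_(?P<acquisition_date>\d{4}-\d{2}-\d{2})" + _TAIL
)


def parse_product_name(name):
    """Split a RapidEye product file name into its named elements."""
    for product, pattern in (("scene", SCENE_RE), ("orthotile", ORTHOTILE_RE)):
        match = pattern.match(name)
        if match:
            return {"product": product, **match.groupdict()}
    raise ValueError(f"unrecognized RapidEye product name: {name!r}")


print(parse_product_name("2018-09-29T163919_RE1_1B_band1.tif"))
print(parse_product_name("1657017_2018-09-29_RE1_3A_Analytic.tif"))
```

The values are returned as strings because the naming scheme above does not state a time zone or a numeric type for any element.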

Processing

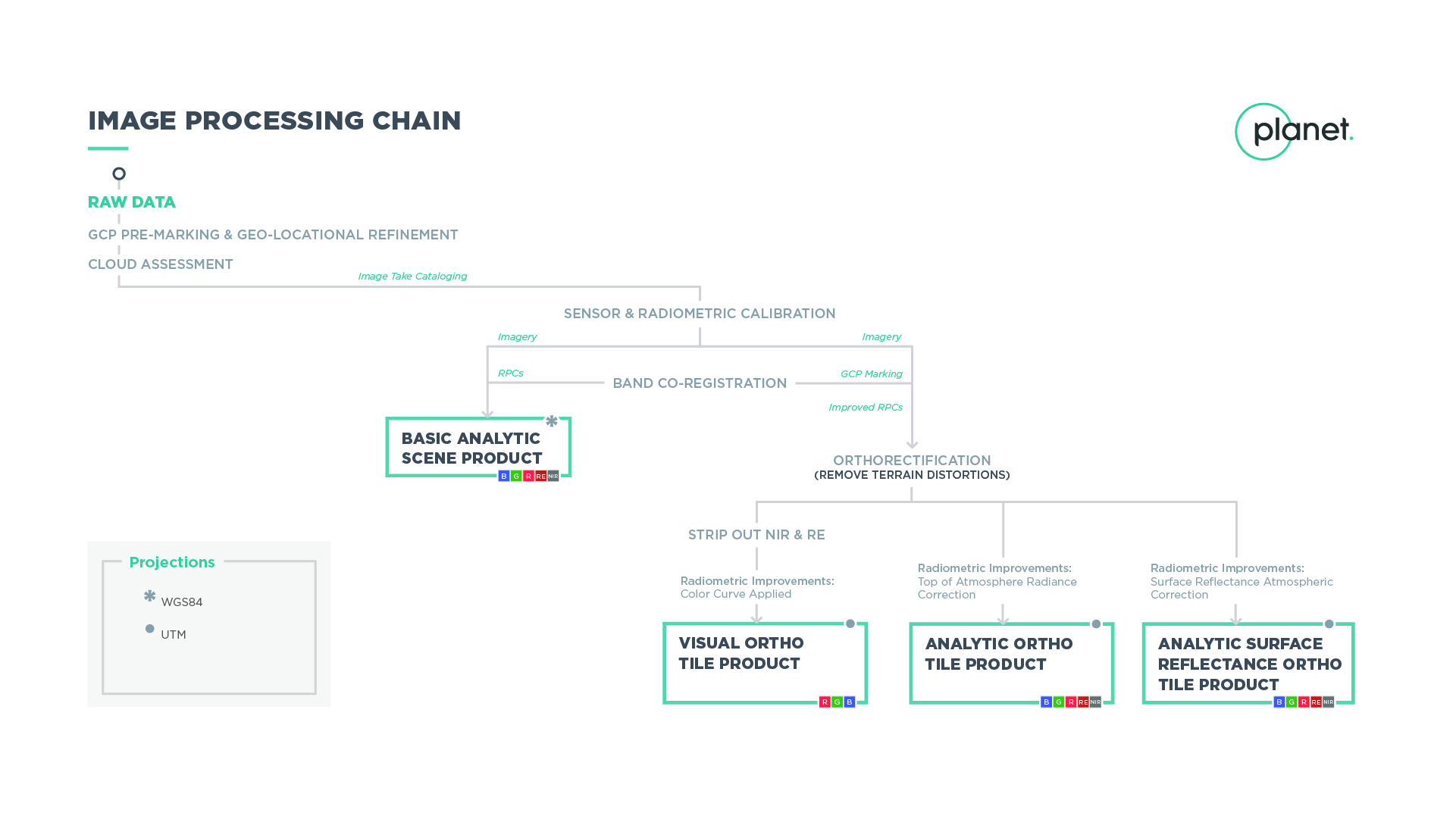

Several processing steps are applied to RapidEye imagery to produce the set of data products available for download.


Sensor & Radiometric Calibration

Flat Field Correction: Flat fields were collected for each optical instrument prior to launch. These fields were used to correct image lighting and CCD element effects to match the optimal response area of the sensor.

Temporal Calibration: To achieve cross-calibration, corrections were applied so that all RapidEye cameras read the same DN (digital number) regardless of when in the mission lifetime the image was taken.

Absolute Calibration: As a last step, the spatially and temporally adjusted datasets were transformed from digital number values into physically based radiance values (scaled to W/(m² sr μm) * 100).
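Because the stored values are radiance multiplied by 100, recovering radiance in W/(m² sr μm) is a single division. The sketch below shows that arithmetic on a plain list of pixel values; `dn_to_radiance` is a hypothetical helper, and in practice the scale factor should be read from the product metadata rather than hard-coded.

```python
# Stored pixel value = radiance * 100, per the scaling stated above.
RADIANCE_SCALE = 100.0


def dn_to_radiance(pixel_values, scale=RADIANCE_SCALE):
    """Convert scaled pixel values to radiance in W/(m^2 sr um)."""
    return [value / scale for value in pixel_values]


print(dn_to_radiance([0, 100, 2550]))  # [0.0, 1.0, 25.5]
```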

Orthorectification

Removes terrain distortions. This process consists of two steps:

- The rectification tiedown process, in which tie points are identified across the source images and a collection of reference images (ALOS, NAIP, Landsat), and rational polynomial coefficients (RPCs) are generated.

- The actual orthorectification of the scenes using the RPCs, to remove terrain distortions. The terrain model used for the orthorectification process is derived from multiple sources (Intermap, NED, SRTM and other local elevation datasets), which are periodically updated. Snapshots of the elevation datasets used are archived, which helps in identifying the DEM that was used for any given scene at any given point.

Visual Product Processing

Presents the imagery as natural color, optimized as seen by the human eye. This process consists of three steps:

- Nominalization - Sun angle correction, to account for differences in latitude and time of acquisition. This makes the imagery look as if it had been acquired at the same sun angle, by converting the exposure time to the nominal time (noon).

- Unsharp mask (sharpening filter) applied before the warp process.

- Custom color curve applied post warping.

Surface Reflectance Product Processing

Removes atmospheric effects. This process consists of three steps:

- Top of Atmosphere (TOA) reflectance calculation using coefficients supplied with the at-sensor radiance product.

- Lookup table (LUT) generation using the 6SV2.1 radiative transfer code and MODIS near-real-time data inputs.

- Conversion of TOA reflectance to surface reflectance for all combinations of selected ranges of physical conditions and for each satellite sensor type using its individual spectral response as well as estimates of the state of the atmosphere.
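To make the first step above concrete, the sketch below applies the standard textbook relation between at-sensor radiance and TOA reflectance: reflectance = π · L · d² / (ESUN · cos θ), where θ is the solar zenith angle. This is not necessarily how the coefficients supplied with the product are defined; they may already fold these terms into a single per-band factor. The function name, its parameters, and the irradiance value used in the example are assumptions for illustration only.

```python
import math


def toa_reflectance(radiance, esun, sun_elevation_deg, earth_sun_distance_au=1.0):
    """Convert at-sensor radiance to TOA reflectance (unitless).

    radiance: W/(m^2 sr um); esun: band exo-atmospheric solar irradiance in
    W/(m^2 um). Both must refer to the same spectral band.
    """
    if not 0.0 < sun_elevation_deg <= 90.0:
        raise ValueError("sun elevation must be in (0, 90] degrees")
    solar_zenith = math.radians(90.0 - sun_elevation_deg)
    return (math.pi * radiance * earth_sun_distance_au ** 2) / (
        esun * math.cos(solar_zenith)
    )


# Hypothetical inputs: 80 W/(m^2 sr um) radiance, 1500 W/(m^2 um) irradiance,
# sun 45 degrees above the horizon, Earth-Sun distance of 1 AU.
print(round(toa_reflectance(80.0, 1500.0, 45.0), 4))
```

The lookup-table generation and the conversion to surface reflectance in the later steps depend on the radiative transfer code and atmospheric inputs, so they are not sketched here.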

You can find a detailed white paper on our Surface Reflectance Products here.
